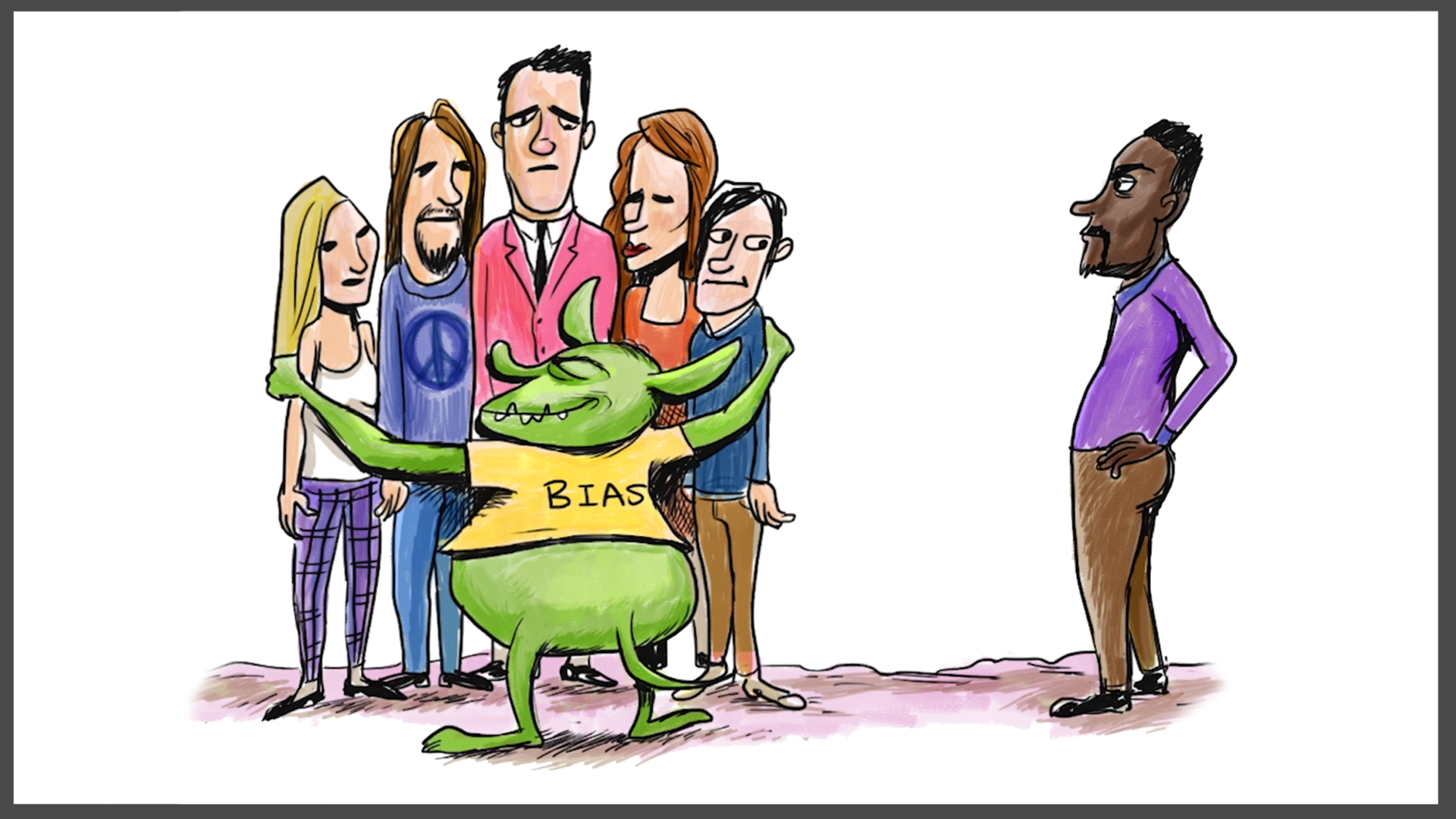

Understanding and addressing bias in artificial intelligence (AI) and machine learning (ML) systems is crucial for creating fair and ethical technologies. One of the most significant types of bias is explicit bias: intentional or overt discrimination that is deliberately programmed into algorithms. This type of bias can have profound impacts on the outcomes of AI systems, affecting everything from hiring decisions to law enforcement. In this post, we define explicit bias, walk through examples of it, and discuss strategies to mitigate its effects.

Understanding Explicit Bias

Explicit bias occurs when bias is intentionally introduced into an AI system. This can happen through deliberately skewed training data, rules hard-coded into an algorithm, or decision-making processes designed to favor some groups over others. Unlike implicit bias, which is often unintentional and subtle, explicit bias is deliberate and overt. It can lead to significant disparities in how different groups are treated by AI systems.

Explicit Bias Examples

To better understand explicit bias, let’s look at some examples:

1. Facial Recognition Systems

Facial recognition systems have been criticized for bias against certain racial and ethnic groups. For instance, some facial recognition algorithms have been shown to have higher error rates for people of color, particularly Black and Asian individuals. This bias is explicit if the algorithm is deliberately designed to prioritize accuracy for certain groups over others. A related but different cause is skewed data: a system trained primarily on images of white individuals may perform poorly when identifying people of color, which is usually an unintentional (implicit) bias unless the skew was a deliberate choice.

2. Hiring Algorithms

Hiring algorithms are another area where explicit bias can be prevalent. These algorithms are often used to screen resumes and select candidates for interviews. If the algorithm is programmed to favor candidates from certain backgrounds or with specific characteristics, it can lead to explicit bias. For example, an algorithm might be designed to prefer candidates from prestigious universities, which can disadvantage applicants from less well-known institutions.

3. Credit Scoring Systems

Credit scoring systems are used by financial institutions to assess the creditworthiness of individuals. Explicit bias can occur if the system is designed to discriminate against certain groups based on factors such as race, gender, or socioeconomic status. For instance, if a credit scoring algorithm is programmed to give lower scores to individuals from low-income neighborhoods, it can perpetuate economic inequality.

4. Law Enforcement Algorithms

Law enforcement agencies use algorithms to predict crime hotspots, assess the risk of recidivism, and make other critical decisions. Explicit bias can be introduced if these algorithms are designed to target specific groups. For example, if a predictive policing algorithm is programmed to focus on certain neighborhoods based on racial or ethnic demographics, it can lead to over-policing and disproportionate surveillance of those communities.

Impact of Explicit Bias

The impact of explicit bias in AI systems can be far-reaching and detrimental. It can lead to:

- Unfair Treatment: Individuals from certain groups may be unfairly targeted or excluded based on biased algorithms.

- Economic Inequality: Biased credit scoring and hiring algorithms can perpetuate economic disparities.

- Social Inequality: Explicit bias in law enforcement algorithms can exacerbate social inequalities and erode trust in institutions.

- Legal Consequences: Biased AI systems can result in legal challenges and regulatory scrutiny, damaging the reputation of organizations that deploy them.

Mitigating Explicit Bias

Addressing explicit bias in AI systems requires a multi-faceted approach. Here are some strategies to mitigate its effects:

1. Diverse and Inclusive Data

Ensuring that training data is diverse and inclusive is a crucial step in reducing explicit bias. This involves collecting data from a wide range of sources and ensuring that it represents the diversity of the population the AI system will serve. For example, facial recognition systems should be trained on images from various racial and ethnic groups to improve accuracy across different demographics.
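As a rough illustration of what checking for diverse data can look like in practice, the sketch below compares each group's share of a training set against a reference share. The function name `representation_gaps`, the group labels, the reference shares, and the 5-point tolerance are all hypothetical choices made for this example, not an established standard.

```python
from collections import Counter


def representation_gaps(group_labels, reference_shares, tolerance=0.05):
    """Return groups whose share of the dataset falls short of a reference share.

    group_labels: one demographic label per training example (assumed to exist).
    reference_shares: expected share per group, e.g. from population statistics.
    tolerance: allowed shortfall before a group is flagged (arbitrary default).
    """
    counts = Counter(group_labels)
    total = len(group_labels)
    gaps = {}
    for group, expected in reference_shares.items():
        actual = counts.get(group, 0) / total if total else 0.0
        if expected - actual > tolerance:
            gaps[group] = {"expected": expected, "actual": round(actual, 3)}
    return gaps


# Toy data: group "C" is missing entirely and group "B" is under-represented.
labels = ["A"] * 80 + ["B"] * 20
reference = {"A": 0.5, "B": 0.3, "C": 0.2}
print(representation_gaps(labels, reference))
```

A check like this only catches under-representation. It says nothing about label quality or whether the reference shares are themselves appropriate for the population the system will serve.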

2. Bias Audits

Conducting regular bias audits can help identify and address explicit bias in AI systems. These audits involve evaluating the performance of algorithms across different groups and identifying any disparities. Organizations should implement bias audits as part of their regular monitoring and evaluation processes to ensure that their AI systems are fair and unbiased.
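One simple form such an audit can take is comparing selection rates across groups. The sketch below computes the share of positive decisions per group and the ratio of the lowest rate to the highest. The function names and the toy records are assumptions made for illustration; a ratio below roughly 0.8 is a commonly used rule of thumb for flagging a disparity, not a definitive test of fairness.

```python
from collections import defaultdict


def selection_rates(records):
    """Compute the share of positive decisions per group.

    records: iterable of (group, selected) pairs, where selected is a bool.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    lowest = min(rates.values())
    return lowest / highest if highest > 0 else 1.0


# Synthetic decisions: 30% of group_a and 15% of group_b are selected.
records = (
    [("group_a", True)] * 30 + [("group_a", False)] * 70
    + [("group_b", True)] * 15 + [("group_b", False)] * 85
)
rates = selection_rates(records)
ratio = disparate_impact_ratio(rates)
print(rates, ratio)
```

In a real audit the same comparison would typically be repeated for error rates and other metrics, since equal selection rates alone do not show that a system treats groups fairly.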

3. Transparent Algorithms

Transparency in algorithm design and implementation is essential for mitigating explicit bias. Organizations should be transparent about how their algorithms work and the data they use. This includes providing clear documentation and explanations of the decision-making processes involved. Transparency can help build trust with users and stakeholders and facilitate the identification and correction of biases.
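To make the idea of explaining a decision concrete, here is a minimal sketch for the simplest case, a linear scoring model, where each feature's contribution to the score can be reported directly. The function `explain_linear_score`, the feature names, and the weights are hypothetical; more complex models generally need dedicated explanation techniques that this example does not cover.

```python
def explain_linear_score(weights, features, bias=0.0):
    """Return a score and each feature's contribution to it.

    weights and features are dicts keyed by feature name (names are illustrative).
    Features missing from the input are treated as 0.
    """
    contributions = {
        name: weights[name] * features.get(name, 0.0) for name in weights
    }
    score = bias + sum(contributions.values())
    # List the most influential features first so reviewers see them at a glance.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return {"score": score, "contributions": ranked}


weights = {"years_experience": 0.5, "skills_matched": 1.0}
applicant = {"years_experience": 4, "skills_matched": 3}
report = explain_linear_score(weights, applicant)
print(report)
```

Publishing a breakdown like this alongside each decision makes it easier for reviewers to spot a feature that is acting as a stand-in for a protected characteristic.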

4. Ethical Guidelines

Developing and adhering to ethical guidelines can help prevent the introduction of explicit bias into AI systems. These guidelines should outline principles for fair and ethical AI development, including the importance of diversity, inclusion, and transparency. Organizations should also establish mechanisms for accountability and oversight to ensure that ethical guidelines are followed.

5. Stakeholder Engagement

Engaging with stakeholders, including affected communities, can provide valuable insights into the potential biases in AI systems. Organizations should involve stakeholders in the design, development, and evaluation of AI systems to ensure that their perspectives and concerns are addressed. This can help identify and mitigate explicit bias and improve the overall fairness and effectiveness of AI systems.

🔍 Note: Mitigating explicit bias requires ongoing effort and vigilance. Organizations must continuously monitor and evaluate their AI systems to ensure that they remain fair and unbiased over time.

Case Studies

To further illustrate the impact of explicit bias and the strategies for mitigating it, let’s examine a couple of case studies:

Case Study 1: Amazon’s Hiring Algorithm

Amazon’s experimental hiring algorithm, described in press reports in 2018, faced criticism for bias against women. The algorithm was trained on historical hiring data, which predominantly featured male candidates. As a result, it learned to favor male candidates over female candidates. The bias was learned from the data rather than deliberately programmed, which shows how historical patterns can reproduce the same outcomes as explicit bias. Amazon reportedly scrapped the tool.

Case Study 2: COMPAS Risk Assessment Tool

The Correctional Offender Management Profiling for Alternative Sanctions (COMPAS) risk assessment tool, used in parts of the U.S. criminal justice system, has been criticized for bias against Black individuals. A 2016 ProPublica analysis reported that the tool assigned higher risk scores to Black defendants than to white defendants, even when controlling for other factors, and that Black defendants who did not go on to reoffend were more likely to be labeled high risk. The tool’s developer disputed that analysis, and race is not a direct input to the tool, so whether the bias counts as explicit is contested. Because risk scores can inform sentencing and supervision decisions, the case shows why such tools need independent scrutiny.
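One way to examine a disparity like the one described above is to compare false positive rates across groups, that is, how often people who did not reoffend were nevertheless labeled high risk. The sketch below uses synthetic records and hypothetical names; the numbers are invented for illustration and are not COMPAS data.

```python
def false_positive_rates(records):
    """Compute the false positive rate per group.

    records: iterable of (group, predicted_high_risk, reoffended) tuples.
    """
    negatives = {}
    false_positives = {}
    for group, predicted_high_risk, reoffended in records:
        if reoffended:
            continue  # only people who did not reoffend can be false positives
        negatives[group] = negatives.get(group, 0) + 1
        if predicted_high_risk:
            false_positives[group] = false_positives.get(group, 0) + 1
    return {group: false_positives.get(group, 0) / n for group, n in negatives.items()}


# Synthetic example: among people who did not reoffend, 40% of group_x
# and 20% of group_y were labeled high risk.
synthetic = (
    [("group_x", True, False)] * 40 + [("group_x", False, False)] * 60
    + [("group_x", True, True)] * 50
    + [("group_y", True, False)] * 20 + [("group_y", False, False)] * 80
)
fpr = false_positive_rates(synthetic)
print(fpr)
```

A gap in false positive rates does not by itself settle whether a tool is unfair, since different fairness metrics can conflict, but it is a concrete starting point for an audit.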

In both cases, bias in the algorithms had significant impacts on the outcomes for affected individuals. Addressing biases like these calls for a combination of diverse data, bias audits, transparency, ethical guidelines, and stakeholder engagement.

In conclusion, explicit bias in AI systems is a serious issue that can have far-reaching consequences. By understanding the nature of explicit bias, examining examples of it, and implementing strategies to mitigate its effects, organizations can work towards creating fairer and more ethical AI technologies. Addressing explicit bias requires ongoing effort and vigilance, as well as a commitment to diversity, inclusion, and transparency. Taking these steps helps AI systems serve the interests of all individuals and communities and promotes fairness and equality in the digital age.
